
AI Agent Architecture Blueprint


Technical assembly of LLMs, Vector DBs, RAG pipelines & Autonomous Workflows

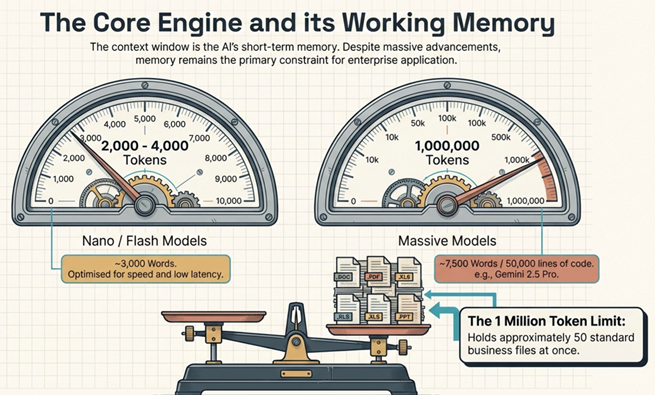

1. The Brain: Understanding the Context Window

Every agent starts with a Large Language Model (LLM). The model's “short-term memory” is its context window: the amount of text it can take into account in a single request.

- The Limit: Even powerful models like Gemini 1.5 Pro (1M+ tokens) have limits. You can’t feed a 500GB company database into a single prompt.

- The Strategy: Choosing the right model (Flash/Nano for speed vs. Pro for deep reasoning) is your first architectural decision.
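To put the limit in numbers, here is a back-of-the-envelope sketch. It assumes roughly 4 characters per token (a common rule of thumb for English text, not an exact figure) and treats 1 byte as 1 character. The function names are hypothetical.

```python
# Rough rule of thumb (an assumption): about 4 characters per token.
CHARS_PER_TOKEN = 4


def estimate_tokens(num_chars: int) -> int:
    """Estimate a token count from a character count using the ratio above."""
    return num_chars // CHARS_PER_TOKEN


def fits_in_context(num_chars: int, context_window_tokens: int) -> bool:
    """Return True if the text would plausibly fit in a single prompt."""
    return estimate_tokens(num_chars) <= context_window_tokens


CONTEXT_WINDOW = 1_000_000        # the 1M-token window mentioned above
database_chars = 500 * 10**9      # 500 GB, treating 1 byte as 1 character

tokens_needed = estimate_tokens(database_chars)
windows_needed = tokens_needed / CONTEXT_WINDOW

print(f"Estimated tokens: {tokens_needed:,}")
print(f"Equivalent full context windows: {windows_needed:,.0f}")
print("Fits in one prompt:", fits_in_context(database_chars, CONTEXT_WINDOW))
```

Under these assumptions the database is several orders of magnitude larger than the window, which is why the next layers (embeddings and retrieval) exist.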

2. The Memory: Embeddings & Vector Databases

To handle massive data, agents use embeddings: text converted into numerical vectors that represent meaning rather than just keywords.

- Semantic Search: Queries are matched to stored content by similarity of meaning, not by exact keyword overlap.

- The Stack: Tools like Pinecone or ChromaDB act as the external long-term memory for your agent.
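A toy illustration of the idea, using hand-made 3-dimensional vectors instead of a real embedding model or vector database. The vector values and the `search` helper are hypothetical; a real stack would get vectors from an embedding model and store them in a tool such as Pinecone or ChromaDB.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


# Hypothetical embeddings; the dimensions loosely mean (finance, HR, IT).
store = {
    "Quarterly revenue report": [0.9, 0.1, 0.0],
    "Employee leave policy": [0.1, 0.9, 0.1],
    "VPN setup guide": [0.0, 0.1, 0.9],
}


def search(query_vector, top_k=1):
    """Return the top_k stored texts ranked by similarity to the query."""
    ranked = sorted(
        store.items(),
        key=lambda item: cosine_similarity(query_vector, item[1]),
        reverse=True,
    )
    return [text for text, _ in ranked[:top_k]]


# Imagine this vector came from "How much holiday do I get?".
# It shares no keywords with "Employee leave policy", yet points the same way.
query = [0.2, 0.8, 0.0]
print(search(query))
```

The match is found through vector direction alone, which is the essence of semantic search.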

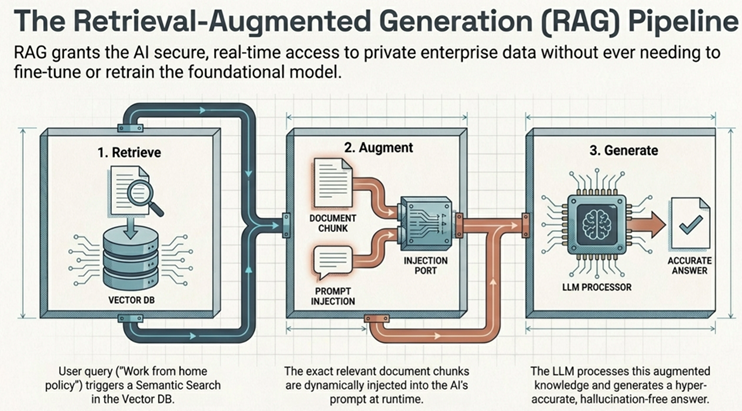

3. The Strategy: Retrieval Augmented Generation (RAG)

RAG is the “secret sauce” that reduces AI hallucinations by grounding answers in your own data. It follows a three-step flow:

- 1. Retrieval: Finding relevant document chunks in the Vector DB.

- 2. Augmentation: Injecting that specific data into the prompt.

- 3. Generation: The LLM answers based only on the provided facts.
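The three steps can be sketched in a few lines. This is a minimal illustration, not a production pipeline: retrieval here uses simple word overlap as a stand-in for a vector search, `stub_llm` is a placeholder for a real model call, and the sample chunks are made up.

```python
import re


def words(text):
    """Lowercase a string and split it into a set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question, chunks, top_k=1):
    """Step 1: rank chunks by word overlap (stand-in for vector search)."""
    q = words(question)
    ranked = sorted(chunks, key=lambda c: len(q & words(c)), reverse=True)
    return ranked[:top_k]


def augment(question, context_chunks):
    """Step 2: inject the retrieved chunks into the prompt."""
    context = "\n".join(f"- {c}" for c in context_chunks)
    return (
        "Answer using only the context below. "
        "If the answer is not there, say you do not know.\n\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )


def generate(prompt, llm):
    """Step 3: hand the augmented prompt to the model."""
    return llm(prompt)


def stub_llm(prompt):
    """Placeholder for a real model call; it only reports the prompt size."""
    return f"(stub answer generated from a {len(prompt)}-character prompt)"


chunks = [
    "Refunds are processed within 14 days of a return request.",
    "Support is available by email from 9am to 5pm.",
    "Orders ship from the Dublin warehouse.",
]
question = "How many days do refunds take?"

top_chunks = retrieve(question, chunks)
prompt = augment(question, top_chunks)
answer = generate(prompt, stub_llm)
print(prompt)
print(answer)
```

Only the refund chunk reaches the prompt, so the model is asked to answer from that fact rather than from whatever it remembers.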

4. The Skeleton: LangChain & Orchestration

Building everything from scratch is inefficient. LangChain acts as the abstraction layer, allowing you to:

- Switch LLM providers (for example, OpenAI to Anthropic) with minimal code changes, often a single line.

- Manage “Chains” of events automatically.

- Standardize how the agent uses memory and tools.
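Why does an abstraction layer make provider switching cheap? The plain-Python sketch below shows the principle. It does not use LangChain itself: `FakeProviderA`, `FakeProviderB`, and `summarize_chain` are hypothetical stand-ins, and no real provider is called.

```python
from typing import Protocol


class ChatModel(Protocol):
    """The shared interface every provider wrapper is expected to offer."""

    def invoke(self, prompt: str) -> str: ...


class FakeProviderA:
    """Stand-in for one vendor's model wrapper."""

    def invoke(self, prompt: str) -> str:
        return f"[provider-a] {prompt}"


class FakeProviderB:
    """Stand-in for a different vendor's model wrapper."""

    def invoke(self, prompt: str) -> str:
        return f"[provider-b] {prompt}"


def summarize_chain(model: ChatModel, text: str) -> str:
    """A two-step 'chain': build the prompt, then call whichever model is given."""
    prompt = f"Summarize in one sentence: {text}"
    return model.invoke(prompt)


model = FakeProviderA()  # change this one line to FakeProviderB() to switch
print(summarize_chain(model, "Agents combine models, memory, and tools."))
```

Because the chain depends only on the shared interface, the rest of the application does not change when the provider does.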

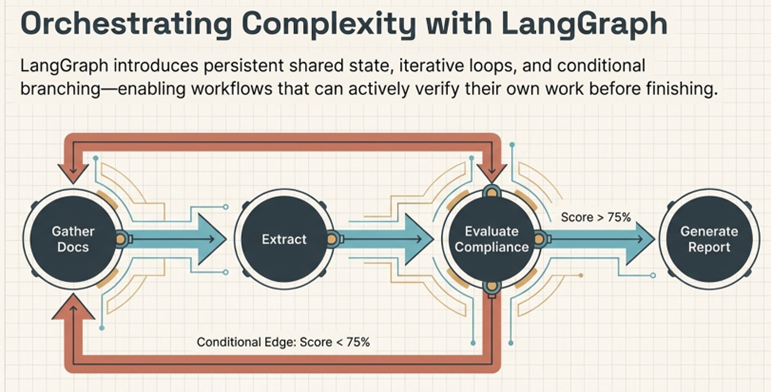

5. The Nervous System: LangGraph for Complex Workflows

Standard “Chains” are linear, but real business logic is loopy and conditional. LangGraph introduces:

- Nodes: Individual units of work.

- Edges: The paths between nodes, including conditional branching.

- State: A shared “memory” that persists across the entire multi-step process.
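To make nodes, edges, and state concrete, here is a tiny hand-rolled graph runner. It illustrates the concepts only and is not the LangGraph API; the `draft` and `review` nodes and the `run` helper are hypothetical.

```python
def draft(state):
    """Node: produce a new draft and record the attempt in shared state."""
    state["attempts"] += 1
    state["draft"] = f"draft v{state['attempts']}"
    return state


def review(state):
    """Node: approve only from the second attempt onward (toy rule)."""
    state["approved"] = state["attempts"] >= 2
    return state


def route_after_review(state):
    """Conditional edge: loop back to drafting until the review passes."""
    return "END" if state["approved"] else "draft"


NODES = {"draft": draft, "review": review}
EDGES = {
    "draft": lambda state: "review",   # fixed edge
    "review": route_after_review,      # conditional edge
}


def run(entry, state, max_steps=10):
    """Walk the graph from the entry node, passing the same state along."""
    current = entry
    for _ in range(max_steps):         # guard against endless loops
        if current == "END":
            break
        state = NODES[current](state)
        current = EDGES[current](state)
    return state


final = run("draft", {"attempts": 0, "approved": False})
print(final)
```

The workflow visits `draft` twice because the first review fails, which is exactly the kind of loop a linear chain cannot express.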

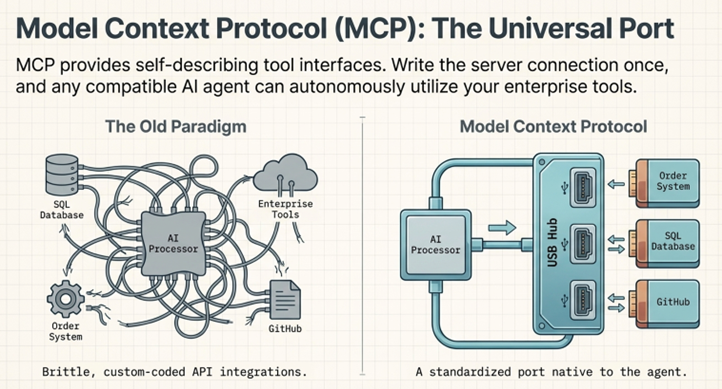

6. The Hands: Model Context Protocol (MCP)

How does an agent actually do things? Through MCP. Think of it as a universal USB port for AI.

- It allows agents to connect to external SQL databases, Slack, GitHub, or local files autonomously.

- Benefit: Instead of writing custom APIs for every tool, you use self-describing interfaces that the AI understands how to use on its own.
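What does “self-describing” look like in practice? The sketch below is a simplified illustration of the idea, not the MCP wire protocol or an official SDK: each tool carries a name, a description, and a JSON-Schema-style description of its inputs, so a client can discover and call it without custom glue code. The registry, the `count_rows` tool, and its fake data are all hypothetical.

```python
import json

TOOLS = {}


def tool(name, description, input_schema):
    """Decorator that registers a function together with its own description."""
    def register(func):
        TOOLS[name] = {
            "description": description,
            "input_schema": input_schema,
            "handler": func,
        }
        return func
    return register


@tool(
    name="count_rows",
    description="Count the rows in a named table.",
    input_schema={
        "type": "object",
        "properties": {"table": {"type": "string"}},
        "required": ["table"],
    },
)
def count_rows(table):
    fake_db = {"customers": 3, "orders": 7}  # stand-in for a real SQL database
    return fake_db[table]


def list_tools():
    """What an agent would read to learn which tools exist and how to call them."""
    return [
        {"name": n, "description": t["description"], "input_schema": t["input_schema"]}
        for n, t in TOOLS.items()
    ]


def call_tool(name, arguments):
    """Check required arguments against the schema, then run the tool."""
    spec = TOOLS[name]
    missing = [k for k in spec["input_schema"].get("required", []) if k not in arguments]
    if missing:
        raise ValueError(f"Missing arguments for {name}: {missing}")
    return spec["handler"](**arguments)


print(json.dumps(list_tools(), indent=2))
print(call_tool("count_rows", {"table": "orders"}))
```

The agent only needs the output of `list_tools()` to decide which tool fits a task and which arguments to supply.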

7. The Voice: Prompt Engineering

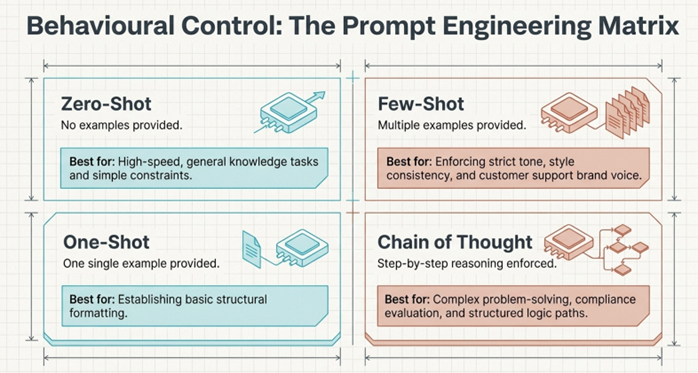

The way you “talk” to the agent determines its success. Key techniques include:

- Few-Shot: Giving the agent 2-3 examples of the desired output style.

- Chain of Thought: Explicitly telling the agent to “think step-by-step” before answering.
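Both techniques come down to how the prompt string is assembled. Here is a minimal sketch; the `build_prompt` helper, the task wording, and the sample tickets are hypothetical.

```python
def build_prompt(task, examples, question, chain_of_thought=True):
    """Assemble a prompt with few-shot examples and an optional step-by-step cue."""
    lines = [task, ""]
    for example_input, example_output in examples:   # few-shot examples
        lines += [f"Input: {example_input}", f"Output: {example_output}", ""]
    lines.append(f"Input: {question}")
    if chain_of_thought:                             # chain-of-thought cue
        lines.append(
            "Think step-by-step before answering, "
            "then give the final answer on the last line."
        )
    lines.append("Output:")
    return "\n".join(lines)


examples = [
    ("Invoice is 30 days overdue", "Priority: high"),
    ("Customer asked for a brochure", "Priority: low"),
]

prompt = build_prompt(
    task="Classify the priority of each support ticket.",
    examples=examples,
    question="Payment system is down for all users",
)
print(prompt)
```

The examples set the output format, and the extra instruction asks the model to reason before committing to an answer.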

The Result?

By combining these layers, companies are cutting long manual processes down to a few minutes, with 24/7 availability.

Are you building agents for your workflow yet?

Get in touch today to discover how CubeMatch can support your business: https://cubematch-144313631.hs-sites-eu1.com/get-in-touch
